Ratproxy is a semi-automated, largely passive web application security audit tool. It is optimized for accurate and sensitive detection, and automatic annotation, of potential problems and security-relevant design patterns, based on the observation of existing, user-initiated traffic in complex web 2.0 environments.

Ratproxy also detects and prioritizes broad classes of security problems, such as dynamic cross-site trust model considerations, script inclusion issues, content serving problems, insufficient XSRF and XSS defenses, and much more.

Ratproxy is a local program designed to sit between your Web browser and the application you want to test. It logs outgoing requests and responses from the application, and can generate its own modified transactions to determine how an application responds to common attacks.

The list of low-level tests it runs is extensive, and includes:

potentially unsafe JSON-like responses

bad caching headers on sensitive content

suspicious cross-domain trust relationships

queries with insufficient XSRF defenses

suspected or confirmed XSS and data injection vectors

Installation:

openSUSE users can install Ratproxy using the "1-click" installer here.

Running Ratproxy:

# ratproxy -v /tmp/ -w ratlog.txt -d hell.com -lfscm

ratproxy version 1.58-beta by

[*] Proxy configured successfully. Have fun, and please do not be evil.

[+] Accepting connections on port 8080/tcp (local only)...

The -d parameter tells ratproxy to run tests only on URLs at the specified domain, so it won't accidentally test a site that your application links to for images or advertising.

The -v parameter tells it where to write trace files.

The -w parameter indicates where log records should be written.
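As a rough sketch, the invocation above can be wrapped in a small shell function so that the trace directory, log file, and domain are named explicitly. The function name `run_ratproxy` and the `RATPROXY_BIN` override are hypothetical helpers introduced here for illustration; only the options already shown (-v, -w, -d, -lfscm) are used, and creating the trace directory in advance is a precaution rather than a documented requirement.

```shell
# Hypothetical wrapper around the ratproxy invocation shown above.
# RATPROXY_BIN is an assumed override for the binary's path or name.
run_ratproxy() {
    trace_dir="$1"   # where trace files are written (-v)
    log_file="$2"    # where log records are written (-w)
    domain="$3"      # domain to restrict tests to (-d)

    # Make sure the trace directory exists before starting the proxy.
    mkdir -p "$trace_dir" || return 1

    "${RATPROXY_BIN:-ratproxy}" -v "$trace_dir" -w "$log_file" -d "$domain" -lfscm
}

# Usage (commented out so that sourcing this file starts nothing):
# run_ratproxy /tmp/ratproxy-traces ratlog.txt hell.com
```

Keeping traces in a dedicated directory rather than directly in /tmp/ makes them easier to clean up after a session.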

Once the proxy is running, you need to configure your web browser to point to the appropriate machine and port (8080). It is advisable to close any non-essential browser windows and purge the browser cache, so as to maximize coverage and minimize noise.
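Browser proxy settings vary, but the same idea can be sketched for command-line HTTP clients. The example below assumes the proxy is listening on 127.0.0.1:8080, as in the startup banner above, and that the client honors the conventional `http_proxy` environment variable (curl does). The helper name `use_ratproxy` is hypothetical.

```shell
# Hypothetical helper: point command-line HTTP clients at a local ratproxy.
# Defaults assume the banner above: port 8080, local connections only.
use_ratproxy() {
    host="${1:-127.0.0.1}"
    port="${2:-8080}"
    http_proxy="http://${host}:${port}"
    export http_proxy
}

# Usage (commented out so that sourcing this file sends no traffic):
# use_ratproxy
# curl -s -o /dev/null http://hell.com/
```

This only covers plain HTTP requests from the shell. Interactive testing still needs the browser itself configured to use the proxy.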

The next step is to open the tested service in your browser, log in if necessary, then interact with it in a regular, reasonably exhaustive manner: try all available views and features, upload and download files, add and delete data, and so forth. Then log out gracefully and terminate ratproxy with Ctrl-C.

Generating Ratproxy Report:

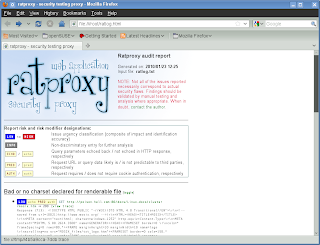

Once the proxy is terminated, you may further process its pipe-delimited (|), machine-readable, greppable output with third-party tools if so desired, then generate a human-readable HTML report:

# ratproxy-report.sh ratlog.txt >report.html

This will produce an annotated, prioritized report with all the identified issues.
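Because the raw log is pipe-delimited, it can also be summarized with standard tools before or instead of building the HTML report. The following is a minimal sketch. The function names are hypothetical, and the meaning of each column is an assumption: inspect a few lines of your own log to see which field holds what before relying on the output.

```shell
# Count records grouped by the value of the first pipe-delimited field.
# Assumption: the first field is a useful grouping key; verify in your log.
count_by_first_field() {
    awk -F'|' '{ n[$1]++ } END { for (k in n) print n[k], k }' "$1" | sort -rn
}

# Print only the records whose given field contains a literal string.
# Arguments: log file, 1-based field number, text to look for.
filter_field() {
    awk -F'|' -v col="$2" -v pat="$3" 'index($col, pat) > 0' "$1"
}

# Usage (commented out; ratlog.txt is the log file from the run above):
# count_by_first_field ratlog.txt
# filter_field ratlog.txt 3 "XSS"
```

Using `index` rather than a regular expression keeps the match literal, so characters such as `.` or `?` in the search text are not treated as patterns.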